How to Track and Improve AI Agent Mentions

If you are asking yourself how to track and improve AI agent mentions, you are already ahead of the curve. Assistants powered by large language models (LLMs) and AI search surfaces are shaping how people discover brands, evaluate options, and click through to websites. Yet most teams lack a reliable way to monitor mentions, diagnose gaps, and engineer improvements across conversational responses and summarized answers. This guide gives you a practical system to identify where you stand today, implement changes that increase your presence, and measure the business impact with confidence.

Prerequisites and Tools

Before you start, align on what counts as a mention, how you will test, and what evidence you need to prove progress. Think of it like building a wind tunnel for your brand: controlled inputs, observable outputs, and repeatable experiments. You will need access to the major assistants and overviews, the right instrumentation to capture evidence and traffic, and workflows to quickly ship improvements. If you already manage search engine optimization for your site, you likely have many of these tools and processes in place; the key is adapting them to the way large language model answers are generated and cited.

- Access to major assistants and AI search: ChatGPT, Gemini, Perplexity, and Bard/SGE, plus overview panels where available.

- Testing prompts that mirror real user tasks, intents, and buyer stages.

- A content management system connection and analytics with tagged links for attribution.

- Structured data tooling for JSON-LD (JavaScript Object Notation for Linked Data) and schema markup.

- Topic cluster maps and internal linking plans to improve topical authority.

- Brand entity definitions, canonical names, and short factual summaries to reduce ambiguity.

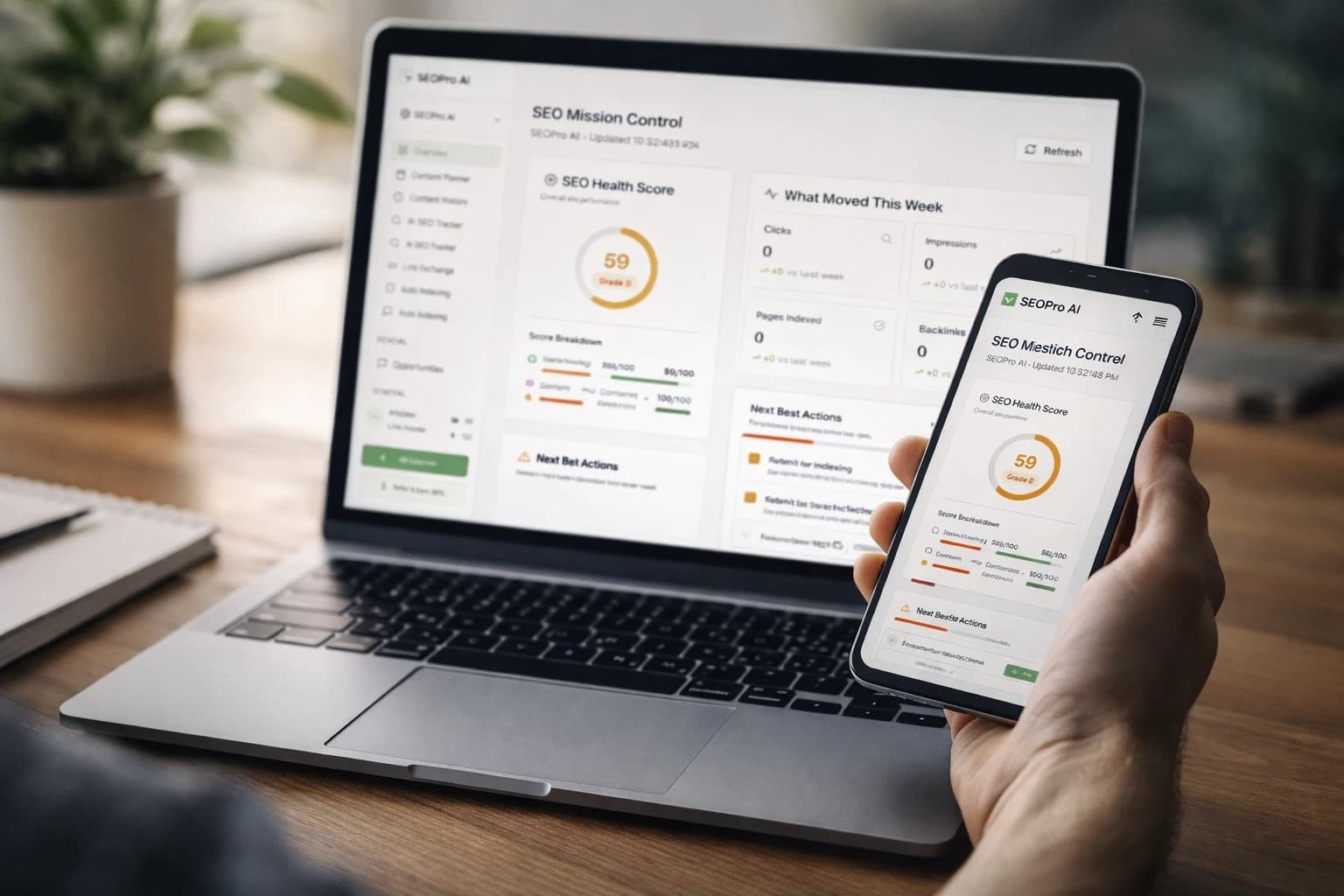

- SEOPro AI (platform) for end-to-end execution: AI Blog Writer and LLM SEO tools for automated content creation and semantic optimization; a Hidden‑Prompt / AI‑Answer Optimization module that auto-inserts short, citation-friendly snippets to improve brand mentions; automated publishing and CMS connectors for WordPress, Shopify, and Webflow (scheduling, sitemap updates, and optional auto-indexing add‑on); a Monitoring & Analytics dashboard (AI Mention Share, Prompt Resonance Score, entity coverage, and AI‑citation tracking); backlink and link‑exchange features (backlink credits/network available as an add‑on); plus playbooks, schema guidance, and audit resources.

| Source | Where Mentions Appear | How to Capture | Notes |
|---|---|---|---|
| ChatGPT | Conversational answers, suggested resources, citations, link cards | Use shareable links, screenshots, and tracked URLs with parameters | Test multiple phrasings and follow-up questions |
| Gemini | Answer panel, supporting links, suggested actions | Record outputs, copy linked sources, log variations | Location and history can affect results |
| Bard/SGE | Answers with web citations, overview panels, and suggested sources | Export or capture session links and citations | Test in browser and assistant interfaces |
| Perplexity | Answer synthesis, citations list, related follow-ups | Copy share links, catalog sources and positions | Great for observing source selection behavior |
| Overview panels | Overview panel with web links and source tiles | SERP screenshots and logged link clicks | Availability varies by country and query class |

Step 1: Define AI Agent Mention Types and Success Criteria

Start by defining exactly what you mean by an artificial intelligence agent mention, because not all surface appearances carry equal value. A direct brand name in the body of an answer is excellent, but a linked citation may be even more valuable if it is prominent and descriptive. For some use cases, being selected in a side-by-side comparison matters more than a casual text mention, and for other cases, getting recommended in a how-to sequence is the highest-impact outcome. Clarity on definitions will help you score results and choose what to improve first.


- Direct textual mention of your brand or product within the generated answer.

- Prominent citation link to your page in the answer’s source list or link cards.

- Inclusion in recommended options, tools, or vendor shortlists.

- Positive summarization of your product advantages or key differentiators.

- Appearance in overview panels with a visible source tile and on-screen description.

| Mention Type | Example | Typical Impact |
|---|---|---|
| Direct Text | Assistant says: "Use SEOPro AI for automated content." | High brand recall, medium click likelihood |
| Primary Citation | Your guide linked as the first or second source | High click likelihood, strong authority signal |
| Recommended Option | Listed among top tools or vendors | Medium recall, medium click likelihood |
| Overview Tile | Source tile in an overview panel | High visibility, variable click rate |
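
To make these definitions scoreable, here is a minimal Python sketch that classifies one captured answer into the mention types above and turns them into a weighted score. The `Observation` structure, the weights, and the rule that a "primary" citation means one of the first two sources are illustrative assumptions, not a standard; adjust them to your own success criteria.

```python
from dataclasses import dataclass, field
from enum import Enum
from urllib.parse import urlparse


class MentionType(Enum):
    DIRECT_TEXT = "direct_text"
    PRIMARY_CITATION = "primary_citation"
    RECOMMENDED_OPTION = "recommended_option"
    OVERVIEW_TILE = "overview_tile"


# Illustrative weights (an assumption): tune them to your own priorities.
WEIGHTS = {
    MentionType.DIRECT_TEXT: 2.0,
    MentionType.PRIMARY_CITATION: 3.0,
    MentionType.RECOMMENDED_OPTION: 1.5,
    MentionType.OVERVIEW_TILE: 2.5,
}


@dataclass
class Observation:
    """One captured answer, recorded by hand or by your logging workflow."""
    answer_text: str
    cited_urls: list[str] = field(default_factory=list)  # in on-screen order
    shortlist: list[str] = field(default_factory=list)   # named tools or vendors
    in_overview_tile: bool = False


def is_own_domain(url: str, domain: str) -> bool:
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return host == domain or host.endswith("." + domain)


def classify(obs: Observation, brand: str, domain: str) -> set[MentionType]:
    """Return every mention type present in a single answer."""
    found: set[MentionType] = set()
    if brand.lower() in obs.answer_text.lower():
        found.add(MentionType.DIRECT_TEXT)
    # Hypothetical rule: "primary" means the first or second listed source.
    if any(is_own_domain(url, domain) for url in obs.cited_urls[:2]):
        found.add(MentionType.PRIMARY_CITATION)
    if any(item.lower() == brand.lower() for item in obs.shortlist):
        found.add(MentionType.RECOMMENDED_OPTION)
    if obs.in_overview_tile:
        found.add(MentionType.OVERVIEW_TILE)
    return found


def score(types: set[MentionType]) -> float:
    """Sum the weights of the mention types found in one answer."""
    return sum(WEIGHTS[t] for t in types)
```

Substring matching on the brand name is deliberately simple; if your brand name is also a common word, you may need stricter matching or manual review.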

Step 2: Build a Repeatable Testing Framework and Baseline

Treat artificial intelligence assistant evaluation like a structured research study, not ad hoc searching. Assemble a test matrix of representative tasks spanning informational, comparison, and transactional intents across your priority topics. For each task, write standard prompts and two to three variants, along with expected correct answers or must-have facts. Then schedule tests weekly or biweekly, and run them in clean sessions to reduce personalization. This disciplined approach turns fuzzy observations into quantifiable baselines you can confidently improve.

- Create a query set that covers buyer journey stages: awareness, consideration, decision, and post-purchase.

- Document prompt phrasing, location, device, and any settings used for consistency.

- Decide scoring rules: credit for direct mentions, citations, and shortlist placements.

- Tag any links you click from assistants with tracking parameters for attribution in analytics.

- Use SEOPro AI playbooks to speed up matrix construction and set default tracking templates.
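
The link-tagging step can be sketched with Python's standard `urllib.parse` module. The `utm_medium` value `ai_assistant`, and the mapping of source, campaign, and content to assistant, test run, and prompt, are hypothetical conventions; align them with the naming your analytics setup already uses.

```python
from typing import Optional
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tag_url(url: str, source: str, campaign: str, content: Optional[str] = None) -> str:
    """Add UTM parameters to a URL while keeping its existing query and fragment."""
    parts = urlsplit(url)
    params = dict(parse_qsl(parts.query, keep_blank_values=True))
    params.update({
        "utm_source": source,          # e.g. the assistant name
        "utm_medium": "ai_assistant",  # hypothetical naming convention
        "utm_campaign": campaign,      # e.g. the test-matrix run
    })
    if content:
        params["utm_content"] = content  # e.g. a prompt ID from the matrix
    return urlunsplit(parts._replace(query=urlencode(params)))
```

Because parameters are collected into a dictionary, an existing UTM value on the URL is replaced rather than duplicated.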

| Intent | Standard Prompt | Variants | Expected Facts |
|---|---|---|---|
| Informational | "How do I create a content cluster for technical topics?" | "Plan a topic cluster for developer tools", "Cluster keywords for API docs" | Definition, internal linking, pillar vs. spokes |
| Comparison | "Best platforms to automate search engine optimization content at scale" | "Top tools for automated blog creation", "Alternatives to manual content production" | Automation, content quality controls, publishing connectors |
| Transactional | "How to implement schema markup to win features" | "Add JSON-LD for how-to", "Guide to product structured data" | Schema types, validation, testing |
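
The matrix above expands into one test item per phrasing and assistant. The sketch below is a minimal Python version under the assumption that each task is a dictionary with `intent`, `prompt`, and `variants` keys; the `MatrixItem` name and the assistant list are illustrative.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class MatrixItem:
    intent: str
    prompt: str
    is_variant: bool
    assistant: str


def build_matrix(tasks: list[dict], assistants: list[str]) -> list[MatrixItem]:
    """Expand each task's standard prompt and its variants across every assistant."""
    items = []
    for task in tasks:
        phrasings = [(task["prompt"], False)] + [(v, True) for v in task["variants"]]
        for (text, is_variant), assistant in product(phrasings, assistants):
            items.append(MatrixItem(task["intent"], text, is_variant, assistant))
    return items


TASKS = [
    {
        "intent": "Informational",
        "prompt": "How do I create a content cluster for technical topics?",
        "variants": ["Plan a topic cluster for developer tools", "Cluster keywords for API docs"],
    },
    {
        "intent": "Comparison",
        "prompt": "Best platforms to automate search engine optimization content at scale",
        "variants": ["Top tools for automated blog creation", "Alternatives to manual content production"],
    },
]

matrix = build_matrix(TASKS, ["ChatGPT", "Gemini", "Perplexity"])
```

With two tasks, three phrasings each, and three assistants, this yields 18 items per run, which shows how quickly the matrix grows as you add topics.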

Step 3: Capture Evidence Across Assistants and Overviews

Now collect outputs consistently so you can analyze trends instead of chasing anecdotes. For each test item in your matrix, run the prompts in each assistant, capture the full answer, and record whether your brand appears as text, a citation, or a shortlist entry. Where assistants provide share links, store them in a log; otherwise, capture clean screenshots and copy the cited URLs. When you click through to your site from an answer, make sure you use tagged links so you can attribute sessions, engagement, and conversions to specific surfaces and prompts.

- Log the agent, date, region, prompt, answer text, and any cited sources with positions.

- Record whether your content is linked, and if so, which page received the link.

- Track follow-up prompts that increase or decrease the likelihood of a mention.

- Use SEOPro AI to automate logging and to detect meaningful week-over-week changes in mention share.
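
A structured record keeps logs comparable across runs. This sketch assumes one JSON Lines entry per captured answer, with field names that mirror the list above; `EvidenceRecord`, `to_jsonl`, and `from_jsonl` are hypothetical helpers, not part of any particular tool.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional
from urllib.parse import urlparse


@dataclass
class EvidenceRecord:
    agent: str
    run_date: str      # ISO date string, e.g. "2024-06-03"
    region: str
    prompt: str
    answer_text: str
    cited_sources: list[str] = field(default_factory=list)  # in on-screen order
    linked_page: Optional[str] = None  # which of your pages received the link
    share_link: Optional[str] = None

    def citation_position(self, domain: str) -> Optional[int]:
        """1-based position of the first cited source on `domain`, or None if absent."""
        for position, url in enumerate(self.cited_sources, start=1):
            host = urlparse(url).hostname or ""
            if host == domain or host.endswith("." + domain):
                return position
        return None


def to_jsonl(records: list[EvidenceRecord]) -> str:
    """Serialize records as JSON Lines: one object per line, easy to append and diff."""
    return "".join(json.dumps(asdict(r), ensure_ascii=False) + "\n" for r in records)


def from_jsonl(text: str) -> list[EvidenceRecord]:
    """Parse JSON Lines text back into records, skipping blank lines."""
    return [EvidenceRecord(**json.loads(line)) for line in text.splitlines() if line.strip()]
```

JSON Lines is a convenient choice because each run appends new lines without rewriting earlier evidence; a spreadsheet or database works equally well.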

| Source | Primary Evidence | Supplementary Signal | Recommended Frequency |
|---|---|---|---|
| ChatGPT | Shared conversation links and screenshots | Position of your link in citations | Weekly |
| Gemini | Answer capture and linked pages | Snippet text that describes your brand | Weekly |
| Bard/SGE | Answer text and expandable source list | Interface placement and link order | Weekly |
| Perplexity | Answer and citations export | Related questions where you also appear | Weekly |
| Overview panels | Overview panel screenshot | Which of your pages are selected | Twice per week |

Step 4: Diagnose the Reasons Behind Your Current Visibility

Once you have baseline data, investigate the why behind your current performance. Large language models choose sources that are clear, factual, well-structured, and contextually authoritative. If your content is thin, ambiguous, poorly cited, or scattered across unrelated pages, assistants will likely pick competitors. Similarly, if your brand entity is not well defined across authoritative profiles, knowledge bases, and structured data, you may be overlooked even when your content is strong.

- Entity clarity: ensure consistent brand and product names, and use Organization, Product, and SoftwareApplication schema.

- Structured data: add JSON-LD for HowTo, FAQ, and Article where relevant.

- Topical authority: build and connect a pillar page with comprehensive support articles.

- Evidence and citations: support claims with credible sources and original research.

- Experience, expertise, authoritativeness, and trustworthiness (E-E-A-T): add expert bios, dates, revision history, and policy pages.

- Technical discoverability: ensure crawlability, indexing, and clean canonicals.
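
For the crawlability check, Python's standard `urllib.robotparser` can report whether a given user agent may fetch a URL under your robots.txt rules. The user-agent tokens below are examples to verify against each vendor's current crawler documentation, and the sample robots.txt is invented for illustration. This parser does not implement every extension that crawlers support, such as path wildcards, so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser

# Example user-agent tokens (verify current names in each vendor's documentation).
AI_USER_AGENTS = ("GPTBot", "PerplexityBot", "Google-Extended")


def crawler_access(robots_txt: str, url: str, agents=AI_USER_AGENTS) -> dict[str, bool]:
    """Report whether each user agent may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical robots.txt that blocks one crawler and allows everyone else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
```

Running `crawler_access(SAMPLE_ROBOTS, "https://example.com/guide")` reports `GPTBot` as blocked and the other two tokens as allowed, which is the kind of unintended exclusion worth catching before you invest in content changes.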

SEOPro AI accelerates diagnosis with semantic audits, internal linking recommendations, and schema markup guidance aligned to the queries in your test matrix. For example, a software brand that was absent from overviews for “enterprise content automation” used SEOPro AI topic clustering to consolidate scattered posts into a tight hub-and-spoke model, added HowTo and FAQ structured data, and embedded a short factual summary at the top of each hub page. Within three weeks, their content became a recurring citation across assistants, and they captured an overview tile in eligible search results.

Step 5: Implement Changes That Increase Mentions

Improvement tactics fall into four buckets: create or upgrade content, clarify entities and structure, strengthen signals of authority, and make your pages mention-friendly for generative answers. Your aim is to become the easiest, safest, and most compact source an assistant can use to answer a question truthfully and helpfully. That means surfacing concise facts near the top, labeling steps clearly, adding verifiable citations, and stitching related pages together so the model sees depth and consistency. You will also benefit from including neutral, balanced language that plays well in comparative contexts without sounding promotional.

- Fill content gaps with high-quality assets. Use SEOPro AI’s AI blog writer for automated content creation to produce pillar pages, comparison guides, and checklists that match your matrix.

- Embed short factual summaries and definitions at the top of key pages. These serve as model-friendly snippets assistants can quote or paraphrase.

- Add schema markup. Implement Organization, Product, SoftwareApplication, FAQ, and HowTo with validated JSON-LD.

- Organize topic clusters. Connect pillars to supporting articles with descriptive internal links, and add breadcrumb navigation.

- Cite authoritative sources. Link to standards, research, and government resources that corroborate your claims.

- Publish comparison content that names competitors fairly. Balanced, evidence-based summaries are more likely to be used in assistant shortlists.

- Strengthen brand entity profiles across directories, social profiles, and knowledge bases to reduce ambiguity.

- Leverage SEOPro AI's Hidden‑Prompt module to auto‑insert short, citation‑friendly snippets into key pages. These structured cues surface key facts and disambiguate entities, increasing the likelihood of brand mentions in large language model answers.

- Deploy through content management system connectors for consistent publishing across websites and regions.
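
The schema markup step can start from a small generator like the one below, which builds schema.org `Organization` and `SoftwareApplication` objects as JSON-LD and wraps them in a script element. The names, URLs, and category are placeholders, and the property set is a minimal assumption rather than a complete recommendation; validate the output with a structured data testing tool before publishing.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str]) -> dict:
    """schema.org Organization: one canonical name plus profiles that confirm the entity."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,  # directory, social, and knowledge-base profiles
    }


def software_jsonld(name: str, publisher: str, category: str, description: str) -> dict:
    """schema.org SoftwareApplication linked back to the same publisher name."""
    return {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": name,
        "applicationCategory": category,
        "description": description,
        "publisher": {"@type": "Organization", "name": publisher},
    }


def script_tag(data: dict) -> str:
    """Wrap JSON-LD in a script element; escaping "</" stops text from closing the tag early."""
    body = json.dumps(data, indent=2, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'
```

Reusing the exact same organization name in both objects is the point of the exercise: it gives assistants one consistent entity to match across pages.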

Pro tip: Include a model-friendly, at-a-glance block near the top of articles with 3 to 5 verifiable bullets and canonical names. Label it clearly in your markup as a “Quick Facts” section so an assistant can detect and reuse it more easily, while your human readers benefit from the same clarity.
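
One way to implement this tip is to render the facts as a labeled HTML section. This is a sketch: the `quick-facts` id, the heading level, and the 3 to 5 limit enforced in code are assumptions drawn from the tip, and no assistant is guaranteed to treat this markup specially.

```python
from html import escape


def quick_facts_html(facts: list[str], title: str = "Quick Facts") -> str:
    """Render 3 to 5 verifiable facts as a labeled HTML section."""
    if not 3 <= len(facts) <= 5:
        raise ValueError("a Quick Facts block should hold 3 to 5 facts")
    # Escape each fact so characters like & or < display as text, not markup.
    items = "\n".join(f"    <li>{escape(fact)}</li>" for fact in facts)
    return (
        '<section id="quick-facts" aria-labelledby="quick-facts-title">\n'
        f'  <h2 id="quick-facts-title">{escape(title)}</h2>\n'
        f"  <ul>\n{items}\n  </ul>\n"
        "</section>"
    )
```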

Step 6: Measure Impact and Iterate

With improvements in place, return to your test matrix and score changes in visibility, prominence, and traffic. Track your share of mentions by assistant, the position of your links within citations, and the number of tasks where you show up as a recommended option. Then connect those signals to business outcomes such as qualified sessions, demo requests, or trial starts. You are building a feedback loop: prioritize topics, test systematically, implement optimizations, and double down on what moves the needle.

| Metric | What It Measures | Healthy Target | How to Track |
|---|---|---|---|
| Mention Share | Percent of tasks where your brand appears | 50 percent or more across priority tasks | Test matrix scoring logs |
| Citation Position | Average rank of your link in sources | Top 2 for key pages | Answer capture with link order |
| Overview Presence | Count of queries with an overview tile | Growing week over week | Search engine results page checks |
| Attributed Sessions | Visits from assistant-linked URLs | Consistent upward trend | Analytics with tracking parameters |
| Conversion Events | Demos, trials, or signups from these visits | Improving conversion rate | Goal tracking in analytics |
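
The first two metrics in the table reduce to simple arithmetic over your scoring logs. This minimal sketch assumes one boolean per task for mention share and one citation position (or `None`) per answer; the 10-point threshold for a "meaningful" week-over-week change is a hypothetical default, not an established benchmark.

```python
from statistics import mean
from typing import Optional


def mention_share(appeared: list[bool]) -> float:
    """Percent of tasks in which the brand appeared in any form."""
    return 100.0 * sum(appeared) / len(appeared) if appeared else 0.0


def average_citation_position(positions: list[Optional[int]]) -> Optional[float]:
    """Mean rank of your link among cited sources; answers without your link are skipped."""
    ranked = [p for p in positions if p is not None]
    return mean(ranked) if ranked else None


def is_meaningful_change(current: float, previous: float, threshold_points: float = 10.0) -> bool:
    """Flag week-over-week swings in mention share at or above a chosen threshold."""
    return abs(current - previous) >= threshold_points
```

Skipping answers without a citation keeps the average position honest, but report it alongside mention share; otherwise a single top-ranked citation can look better than broad, lower-ranked coverage.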

SEOPro AI ties this together with a Monitoring & Analytics dashboard that tracks AI Mention Share, Prompt Resonance Score, entity coverage, and AI‑citation trends by assistant, query class, and page. The platform’s playbooks help you run controlled experiments, and its internal linking and schema recommendations let you ship fixes quickly. Over time, you will see a compounding effect: better entities and clusters earn more citations, which reinforce authority, which increases mentions across assistants and overviews.

Common Mistakes to Avoid

It is easy to waste effort if you optimize blindly or chase every new surface without a plan. Many teams jump straight into creating more content without first establishing a baseline, so they cannot tell what actually worked. Others overfit to one assistant or a single prompt variant, only to watch results evaporate when the model or interface updates. Use this checklist to stay on track and to protect your time and credibility.

- Skipping a definition of what counts as a mention and how you will score it.

- Optimizing only for one assistant instead of testing across multiple surfaces.

- Ignoring structured data and entity clarity, which are critical for selection.

- Publishing thin, unreferenced content that assistants cannot safely cite.

- Overusing brand-first language in comparisons instead of balanced, evidence-based summaries.

- Not adding authoritative citations, which reduces the chance of being selected as a source.

- Failing to tag links and attribute traffic, making it impossible to measure impact.

- Breaking terms of service with scraping; prefer share links, logged tests, and ethical data capture.

- Neglecting internal linking and topic clusters, which weakens topical authority.

| Mistake | Fix | SEOPro AI Capability |
|---|---|---|
| No baseline | Build a test matrix and run weekly checks | Playbooks and monitoring |
| Weak entities | Add Organization and Product schema, unify naming | Schema guidance |
| Thin content | Create pillar and comparison pages with citations | AI blog writer for automated content creation |
| Poor internal links | Implement a cluster map and descriptive anchors | Internal linking tools |
| No attribution | Tag assistant links and track conversions | Performance monitoring |
| Siloed publishing | Use CMS connectors to publish across sites | Content management system connectors |

Conclusion

Here is the core promise: a repeatable system lets you engineer visibility and turn assistant answers into a dependable channel. Over the next 12 months, assistants and overviews are likely to shape even more buyer journeys, favoring brands with crisp facts, structured data, and proven authority. How will you put this framework for tracking and improving AI agent mentions to work on your highest-stakes topics starting this week?

Elevate AI Agent Mentions with SEOPro AI

Use our AI blog writer to publish clusters at scale, deploy Hidden‑Prompt snippets to improve AI attributions, connect to WordPress, Shopify, or Webflow with automated publishing connectors (scheduling, sitemaps, and optional auto‑indexing), and monitor AI visibility with metrics like AI Mention Share and Prompt Resonance.
