How to Measure AI Search Visibility

Search is changing faster than your dashboards. In the space of a year, answer engines and conversational assistants have started mediating discovery, recommendation, and trust at scale, which means your brand must win on these new surfaces to grow. If you have wondered how to quantify AI search visibility across ChatGPT, Gemini, Google AI Overviews, and Perplexity, you are not alone. Many teams still track classic rank positions while missing whether assistants mention their brand, cite their pages, or recommend them at pivotal moments. By the end of this guide, you will have a clear, step-by-step method to measure visibility, understand what influences it, and connect it to pipeline and revenue outcomes.

Why does this matter now? Industry surveys suggest that more than half of buyers consult assistants weekly for research, product comparisons, and how-to tasks, while over a third say these answers strongly shape their shortlists. Traditional SEO (Search Engine Optimization) data is necessary but not sufficient when AI (Artificial Intelligence) systems synthesize sources, rewrite answers, and sometimes omit brands entirely. You need a framework that tracks brand mentions, citations, and share of voice within AI answers, then ties those signals to traffic and conversions. This guide blends proven measurement fundamentals with new practices drawn from LLM (Large Language Model) behavior. Along the way, you will see where SEOPro AI can automate collection, embed hidden prompts, publish faster via CMS (Content Management System) connectors, and monitor for LLM drift so you can act before revenue slips.

Prerequisites and Tools

You do not need a large data science team to get started, but you do need clarity, instrumentation, and a manageable prompt set. Before you measure, align stakeholders on why AI (Artificial Intelligence) visibility matters, what good looks like for your organization, and how decisions will be made from the data. Then assemble a minimal, dependable toolkit so you can collect signals consistently, repeat experiments, and compare periods and competitors with statistical discipline.

- Business objectives and guardrails: Define target segments, use cases, and markets. Agree on acceptable optimization practices and disclosure standards, especially when using hidden prompts for LLM (Large Language Model) mention triggers.

- Prompt panel: A curated list of 100 to 300 prompts that represent your priority journeys across the funnel. Include informational, comparative, and transactional intents.

- Evaluation environment: Either a manual testing routine with time-boxed scripts or an automated runner. SEOPro AI provides an evaluation harness with LLM (Large Language Model) SEO (Search Engine Optimization) tools for ChatGPT, Gemini, and Perplexity.

- Analytics and logs: A web analytics platform, server logs, and events to segment AI (Artificial Intelligence)-sourced visits, outbound assistant shares, and copy-link behaviors.

- Publishing capabilities: A CMS (Content Management System) with structured content models; schema support; and a process for internal linking. SEOPro AI offers CMS (Content Management System) connectors and content automation pipelines.

- Governance assets: Checklists for semantic optimization, schema markup, internal linking, and attribution. SEOPro AI includes playbooks, audit templates, and AI-assisted internal linking strategies.

| Capability | Why It Matters | Manual Option | Automated Option |
|---|---|---|---|
| Prompt Execution | Reproducible queries across assistants and time windows | Analyst runs prompts and logs results in a spreadsheet | SEOPro AI evaluator with scheduled runs and snapshots |
| Mention & Sentiment Detection | Understands if assistants recommend or warn against you | Manual reading and labeling | SEOPro AI entity and sentiment parser with QA (Quality Assurance) |
| Citation & Source Tracking | Measures whether your pages are cited or linked | Copy-paste citations into a log | SEOPro AI citation resolver and indexer |
| Analytics Segmentation | Connects AI (Artificial Intelligence) visibility to traffic and revenue | Custom dimensions and UTM (Urchin Tracking Module) conventions | SEOPro AI traffic tagging assistant and dashboards |

Step 1: Define Outcomes, Not Just Rankings

Start by mapping business outcomes to measurable signals. Rather than asking only whether you appear in an AI (Artificial Intelligence) response, decide what kind of appearance you need at each stage. At the awareness stage, you want your brand mentioned favorably when someone asks for “best X software.” Mid-funnel, you want assistants to cite your comparison guides. Near conversion, you want recommendations that name you plainly with clear reasons and possibly links to high-intent pages. Tie each to a KPI (Key Performance Indicator) and a decision rule. For example, “If recommendation share falls below 25 percent for top 50 prompts, trigger a sprint to improve sources and schema.” This way, you transform vague interest into accountable action.

| Business Goal | Primary Metric | Proxy Signal | Decision Rule | Cadence |
|---|---|---|---|---|
| Awareness in category | Share of Mentions (SOM) | Entity presence in top response section | Below 30 percent triggers content gap analysis | Weekly |
| Trusted expertise | Citation Rate | Count of your URLs (Uniform Resource Locators) cited per 100 prompts | Down 20 percent week-over-week triggers source publishing | Weekly |
| Demand capture | Recommendation Share | “We recommend [Brand]” statements | Top competitors exceed you by 10 points triggers AEO (Answer Engine Optimization) sprint | Biweekly |
| Revenue impact | AI (Artificial Intelligence)-Attributed Conversions | Segmented goal completions and assisted conversions | Variance exceeds 15 percent triggers attribution review | Monthly |
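
The decision rules in the table above can be written down as plain threshold checks so they fire the same way every cycle. The following is a minimal sketch rather than a definitive implementation: the metric keys, the `DecisionRule` class, and the inclusive boundaries are all assumptions made for illustration, and only the thresholds come from the table.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class DecisionRule:
    """One row of the outcomes table (hypothetical structure)."""
    metric: str
    triggered: Callable[[dict], bool]
    action: str


# Thresholds are taken from the table; the metric keys are assumed names.
RULES = [
    DecisionRule(
        "share_of_mentions",
        lambda m: m["share_of_mentions"] < 30.0,
        "content gap analysis",
    ),
    DecisionRule(
        "citation_rate",
        # Week-over-week change in percent; -20 or lower means "down 20 percent".
        lambda m: m["citation_rate_wow_change"] <= -20.0,
        "source publishing",
    ),
    DecisionRule(
        "recommendation_share",
        # Gap to the strongest competitor, in percentage points.
        lambda m: m["top_competitor_recommendation_share"]
        - m["recommendation_share"] >= 10.0,
        "AEO sprint",
    ),
]


def actions_for(metrics: dict) -> list[str]:
    """Return the actions whose decision rule fires for this period."""
    return [rule.action for rule in RULES if rule.triggered(metrics)]


weekly = {
    "share_of_mentions": 34.0,
    "citation_rate_wow_change": -25.0,
    "recommendation_share": 22.0,
    "top_competitor_recommendation_share": 30.0,
}
print(actions_for(weekly))
```

Keeping the rules as data makes it easy to review thresholds with stakeholders and to add the monthly attribution check once conversion variance is being tracked.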

Step 2: Build a Representative Prompt Panel

Your measurement is only as good as your prompts. Construct a panel that mirrors the journeys your audience actually takes, balancing breadth with statistical power. Include generic category terms, problem-solution questions, brand comparisons, and task-based how-tos. Localize for your core markets and consider device contexts. Keep prompts human-sounding to avoid overfitting to robotic phrasing and capture how people truly ask. Document language, market, and intent for each prompt, then randomize run order to reduce bias. Over time, prune low-signal prompts and add emergent ones from search console data, community forums, and sales calls. SEOPro AI provides prompt templates mapped to funnel stages plus suggested variants tuned for LLM (Large Language Model) behavior.

- Top-of-funnel: “What is data loss prevention” or “alternatives to manual data backups.”

- Mid-funnel: “Best data loss prevention tools for mid-market” or “Brand A vs Brand B pros and cons.”

- Bottom-of-funnel: “Is Brand A good for regulated industries” or “How to migrate from X to Y safely.”

- JTBD (Jobs To Be Done) tasks: “Create a policy for remote device security” or “Draft procurement criteria for DLP (Data Loss Prevention).”
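
One way to keep the panel documented and the run order unbiased is to store each prompt with its metadata and shuffle with a recorded seed. This is a sketch under assumptions: the `PanelPrompt` fields and the stage labels are hypothetical names, and the example prompts are the ones listed above.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class PanelPrompt:
    """A prompt plus the metadata the panel should document (assumed fields)."""
    text: str
    intent: str    # "informational", "comparative", or "transactional"
    stage: str     # "top", "mid", or "bottom" of the funnel
    language: str
    market: str


PANEL = [
    PanelPrompt("What is data loss prevention", "informational", "top", "en", "US"),
    PanelPrompt("Best data loss prevention tools for mid-market", "comparative", "mid", "en", "US"),
    PanelPrompt("Is Brand A good for regulated industries", "transactional", "bottom", "en", "US"),
]


def run_order(panel: list[PanelPrompt], seed: int) -> list[PanelPrompt]:
    """Return a shuffled copy of the panel; the seed makes the order repeatable."""
    rng = random.Random(seed)
    order = list(panel)  # copy so the stored panel keeps its original order
    rng.shuffle(order)
    return order


for prompt in run_order(PANEL, seed=20240101):
    print(prompt.stage, "|", prompt.text)
```

Logging the seed with each run lets you reproduce the exact order later when auditing a surprising result.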

Step 3: Benchmark AI Search Visibility Across Assistants

With your panel ready, run it across priority assistants on a set cadence and score outcomes consistently. For each prompt, capture whether your brand is mentioned, the sentiment of that mention, whether a recommendation is made, and whether your pages are cited. Also record the prominence of your appearance within the response to avoid counting a buried footnote the same as a top-line callout. Normalize scores across assistants to create a blended index you can track over time and compare with competitors. SEOPro AI automates these runs and saves snapshots so you can audit changes when LLM (Large Language Model) updates roll out.

| Signal | Weight | How to Measure | Example Scoring |
|---|---|---|---|
| Mention Presence | 0.25 | Named entity appears in main answer | 1 if present; 0 if absent |
| Sentiment | 0.20 | Positive, neutral, or negative tone re: brand | +1, 0, or -1 respectively |
| Recommendation | 0.30 | Explicit “we recommend” or ranked inclusion | 1 for recommend; 0.5 for include; 0 for omit |
| Citation | 0.15 | Assistant cites or links your URL (Uniform Resource Locator) | 1 if cited; 0 if not |
| Prominence | 0.10 | Position of mention within answer | Top third 1; middle 0.5; bottom 0.25 |

Compute a per-prompt score as the weighted sum of these signals, then average by assistant and across the full panel. Track variability as well as averages to see stability, not just peaks. If you are early, start with fewer signals, but do not skip sentiment or citation, because they often predict real-world trust and referral traffic better than raw mention counts. SEOPro AI reports include trend lines, assistant overlays, and competitor deltas so your team can prioritize where lift is most feasible.
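
The weighted sum and the per-assistant summary can be sketched in a few lines. The weights are the ones in the table; the signal keys, the function names, and the choice to score prominence as 0 when the brand is absent are assumptions for illustration.

```python
from statistics import mean, pstdev

# Weights from the scoring table; they sum to 1.0.
WEIGHTS = {
    "mention": 0.25,
    "sentiment": 0.20,
    "recommendation": 0.30,
    "citation": 0.15,
    "prominence": 0.10,
}


def prompt_score(signals: dict) -> float:
    """Weighted sum of the five signals for one prompt on one assistant."""
    return sum(weight * signals[name] for name, weight in WEIGHTS.items())


def summarize(scores_by_assistant: dict) -> dict:
    """Mean and population standard deviation of prompt scores per assistant."""
    return {
        assistant: {"mean": mean(scores), "stdev": pstdev(scores)}
        for assistant, scores in scores_by_assistant.items()
    }


# Mentioned positively, included but not explicitly recommended, cited, top third.
strong = {"mention": 1, "sentiment": 1, "recommendation": 0.5, "citation": 1, "prominence": 1}
# Brand absent from the answer: every signal scores 0 (assumption for prominence).
absent = {"mention": 0, "sentiment": 0, "recommendation": 0, "citation": 0, "prominence": 0}

scores = {"ChatGPT": [prompt_score(strong), prompt_score(absent)]}
print(summarize(scores))
```

With these weights the first example works out to 0.85. Because sentiment can be -1, a negative mention can pull a prompt's score below what presence alone would earn, which is the intended behavior of the scheme.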

Step 4: Instrument Analytics for AI-Sourced Traffic

Measurement is incomplete until you connect visibility to behavior on your site. Create a segmentation plan that isolates AI (Artificial Intelligence)-sourced visits, assisted traffic, and copy-link flows. While some assistants provide explicit referrals, many do not. Use a mix of heuristics and explicit tags. For example, deploy short links in your high-performing resources and track their pasting into chat windows via copy events. Build landing pages designed for assistant summaries with unique UTMs (Urchin Tracking Modules) and watch for elevated direct traffic with assistant-like patterns. Capture “How did you find us?” survey data, too, and code for assistants. Then tie these segments to conversions, pipeline, and revenue in your attribution model.

- Set a custom dimension for “Answer Engine Source” and classify known referrers, copy-link events, and assistant-specific landing pages.

- Track “Share This Snippet” clicks on comparison pages to infer assistant copy behaviors.

- Use server logs to detect user agents or patterns correlated with assistants and create cautious groupings.

- Analyze time-to-first-interaction and bounce patterns on assistant-optimized pages to refine content and structure.
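
A first pass at the “Answer Engine Source” dimension can be a lookup on the referrer hostname. This is a hedged sketch: the hostnames in `ASSISTANT_REFERRERS` are assumptions that should be checked against your own referral logs, and many assistant visits arrive with no referrer at all, which this function deliberately leaves as "Other".

```python
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against your own analytics before relying on them.
ASSISTANT_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def answer_engine_source(referrer_url: str) -> str:
    """Classify a referrer URL into an assistant label, or "Other"."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, label in ASSISTANT_REFERRERS.items():
        # Match the domain itself or any subdomain, but not lookalike hosts.
        if host == domain or host.endswith("." + domain):
            return label
    return "Other"


for ref in [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=dlp",
    "https://www.google.com/",
    "",
]:
    print(repr(ref), "->", answer_engine_source(ref))
```

Copy-link events and assistant-specific landing pages would feed the same dimension through separate rules, since they cannot be inferred from the referrer.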

SEOPro AI includes tagging recommendations, traffic segment builders, and dashboards designed to correlate visibility indices with sessions, assisted conversions, and eventual revenue, giving you a defendable story for leadership about Return on Investment (ROI).

Step 5: Measure Sentiment, Authority, and Source Coverage

Assistants do not just list options; they express confidence and caution. Track three layers. First, sentiment: positive, neutral, or negative framing when your brand is named. Second, authority: whether assistants cite your pages, third-party reviews, or rival content. Third, source coverage: which domains and content formats consistently appear in citations. If assistants prefer certain domains, prioritize publishing and backlink building there. Measure shifts after content releases so you can validate causality. SEOPro AI’s parser extracts brand tone and aggregates cited domains so you can run “publish-on-these-sites” plays that align with how LLMs (Large Language Models) choose sources.

| Layer | Metric | Action if Low | Typical Lift Driver |
|---|---|---|---|
| Sentiment | Positive Share | Update product pages; address support issues publicly | Clear positioning, social proof, and transparent comparisons |
| Authority | Citation Rate | Improve schema and publish expert-led resources | Technical accuracy and depth, plus structured data |
| Source Coverage | Presence in Trusted Domains | Pitch authoritative publishers; contribute research | Original data and repeatable frameworks |
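
Source coverage and citation rate can both be derived from the list of URLs each answer cites. The sketch below assumes citations have already been extracted per answer; the function names and example URLs are hypothetical, and citation rate is computed here as the share of answers citing your domain at least once, which is one possible reading of the metric.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domain_counts(citations_per_answer: list[list[str]]) -> Counter:
    """Count how many answers cite each domain (once per answer)."""
    counts = Counter()
    for urls in citations_per_answer:
        domains = {urlparse(url).hostname for url in urls}
        domains.discard(None)  # skip entries that are not parseable URLs
        counts.update(domains)
    return counts


def citation_rate(citations_per_answer: list[list[str]], own_domain: str) -> float:
    """Answers citing own_domain at least once, per 100 answers."""
    if not citations_per_answer:
        return 0.0
    hits = sum(
        any(urlparse(url).hostname == own_domain for url in urls)
        for urls in citations_per_answer
    )
    return 100.0 * hits / len(citations_per_answer)


answers = [
    ["https://example.com/guide", "https://reviews.example.org/roundup"],
    ["https://example.com/compare", "https://example.com/pricing"],
    [],  # an answer with no citations still counts toward the denominator
]
print(cited_domain_counts(answers).most_common())
print(round(citation_rate(answers, "example.com"), 1))
```

Sorting the counter shows which domains assistants lean on most in your category, which is the input for deciding where to publish next.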

Step 6: Optimize Content, Schema, and Internal Links for AEO (Answer Engine Optimization)

Improving visibility requires making your expertise legible to machines and helpful to people. Start with semantic coverage: build topic clusters that answer complete journeys, not one-off keywords. Add schema markup for Organization, Product, HowTo, FAQ (Frequently Asked Questions), and Review where appropriate so assistants can extract facts with low ambiguity. Tighten internal linking so your cluster hubs distribute authority and clarify relationships. Use concise summaries and bullet lists so answer engines can quote clean snippets. SEOPro AI provides semantic optimization checklists, schema markup guidance to win SERP (Search Engine Results Page) features and Google AI Overviews, and AI-assisted internal linking checklists that scale across hundreds of pages.

- Write definitive, skimmable answers at the top of pages that assistants can quote verbatim.

- Embed structured data using schema.org types and validate with testing tools; fix warnings promptly.

- Use consistent anchor text for internal links to reinforce entity relationships within topic clusters.

- Publish comparison and “best of” resources with transparent criteria and up-to-date tables.
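
As an illustration of the structured-data step, the sketch below builds schema.org `FAQPage` markup as JSON-LD from question and answer pairs. The helper names and the sample text are assumptions; the output should still be checked with a structured-data testing tool before publishing.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data: dict) -> str:
    """Serialize JSON-LD into a script tag ready to embed in a page."""
    # Escape "</" so answer text cannot close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


faq = faq_jsonld([
    ("What is data loss prevention?",
     "Data loss prevention (DLP) is a set of tools and policies that keep sensitive data from leaving an organization."),
])
print(as_script_tag(faq))
```

Generating the markup from the same source as the visible FAQ text keeps the two in sync, which matters because mismatches between markup and page content are a common cause of validation warnings.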

Step 7: Embed Hidden Prompts and Distribution Plays

Assistants learn from patterns in content and the web graph. Beyond on-page optimization, strengthen signals that increase the likelihood of being mentioned or cited. Publish original research and benchmarks that others will reference. Contribute expert quotes to third-party roundups. Ensure your docs and resources are easily crawlable and cached. Where appropriate and ethical, include subtle, non-user-facing hidden prompts that help LLMs (Large Language Models) recognize your brand in context. SEOPro AI supports hidden prompts embedded in content to trigger AI (Artificial Intelligence)/LLM (Large Language Model) brand mentions responsibly and provides playbooks that map which sources specific assistants seem to trust for different topics. The result is a repeatable distribution system that compounds visibility across both traditional and answer-led discovery.

- Publish on domains assistants disproportionately cite in your category; prioritize those with clear editorial standards.

- Create canonical explainers for core entities and keep them updated; consistency reduces assistant hedging.

- Use content automation pipelines to refresh key pages quarterly and propagate updates across your cluster.

Step 8: Monitor Change and Detect LLM (Large Language Model) Drift

Assistant behavior changes frequently with model updates and policy shifts. Set a weekly or biweekly cadence to rerun your panel and compare results with the prior snapshot. Watch for sudden drops in mentions, citations, or recommendation share that affect multiple prompts. These often indicate LLM drift rather than isolated content issues. Add annotations for major model releases, product launches, and notable PR (Public Relations) events. When drift is detected, run focused experiments: update summaries, add missing schema, expand comparison criteria, or publish a clarifying explainer. SEOPro AI’s content performance monitoring highlights statistically significant changes and suggests likely fixes, reducing time-to-recovery.

- Alert thresholds: e.g., more than 10-point decline in recommendation share or more than 20 percent drop in citation rate across two assistants.

- Drilldowns: group by topic cluster, assistant, market, or intent to localize the issue.

- Countermeasures: fast patches to high-traffic pages first, then structural improvements to clusters.
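
The alert thresholds above can be checked by comparing two snapshots assistant by assistant. This sketch takes one reading of the rule, namely that an alert fires when at least two assistants breach either threshold; the snapshot layout and function names are assumptions.

```python
def breaches(prev: dict, curr: dict) -> bool:
    """True if one assistant crossed either example threshold between snapshots."""
    # Recommendation share is compared in percentage points.
    rec_drop_points = prev["recommendation_share"] - curr["recommendation_share"]
    # Citation rate is compared as a relative (percent) drop.
    prev_rate = prev["citation_rate"]
    cit_drop_pct = (
        100.0 * (prev_rate - curr["citation_rate"]) / prev_rate if prev_rate > 0 else 0.0
    )
    return rec_drop_points > 10.0 or cit_drop_pct > 20.0


def drift_alert(prev_snapshot: dict, curr_snapshot: dict, min_assistants: int = 2) -> list[str]:
    """Return the breaching assistants if enough of them moved together, else []."""
    flagged = sorted(
        name
        for name in prev_snapshot
        if name in curr_snapshot and breaches(prev_snapshot[name], curr_snapshot[name])
    )
    return flagged if len(flagged) >= min_assistants else []


previous = {
    "ChatGPT": {"recommendation_share": 31.0, "citation_rate": 27.0},
    "Gemini": {"recommendation_share": 28.0, "citation_rate": 20.0},
    "Perplexity": {"recommendation_share": 25.0, "citation_rate": 30.0},
}
current = {
    "ChatGPT": {"recommendation_share": 19.0, "citation_rate": 26.0},
    "Gemini": {"recommendation_share": 27.0, "citation_rate": 15.0},
    "Perplexity": {"recommendation_share": 24.0, "citation_rate": 29.0},
}
print(drift_alert(previous, current))
```

Requiring movement on more than one assistant is what separates likely model drift from an isolated content problem; a single breaching assistant is better handled through the drilldowns by cluster, market, or intent.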

Step 9: Tie Visibility to Pipeline and Revenue

Executives care about outcomes. Close the loop by connecting assistant visibility to qualified pipeline and revenue. Use assisted-conversion reporting for segments likely influenced by AI (Artificial Intelligence) discovery and validate with post-purchase surveys that list assistants explicitly. Where possible, run geo or time-based holdouts to quantify lift: pause certain clusters in a market for a limited time and measure the delta in assistant mentions and conversions. Attribute prudently; assistants compress funnels, so do not expect the same last-click behavior as ads. SEOPro AI’s dashboards blend visibility indices, traffic segments, and CRM (Customer Relationship Management) outcomes to show how improvements in mention share and citations correlate with tangible business impact.

| Attribution Input | Where It Comes From | How It Supports Revenue Stories |
|---|---|---|
| Assistant Visibility Index | Prompt runs and scoring | Explains why organic demand rose despite flat blue-link ranks |
| AI (Artificial Intelligence)-Sourced Sessions | Traffic segments and copy-link tags | Quantifies contribution of answer engines to site engagement |
| Assisted Conversions | Analytics multi-touch models | Shows how assistants accelerate consideration and reduce time-to-close |
| Survey Mentions | On-site and post-purchase polls | Triangulates influence when referrers are opaque |
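
For the geo or time-based holdouts mentioned above, one common way to express the delta is a difference-in-differences comparison between the market where clusters were paused and a comparable control market. The guide does not prescribe this estimator, so treat it as an assumed technique; the conversion figures below are invented purely to show the arithmetic.

```python
def diff_in_diff(
    treated_before: float,
    treated_after: float,
    control_before: float,
    control_after: float,
) -> float:
    """Change in the treated market minus the change in the control market."""
    return (treated_after - treated_before) - (control_after - control_before)


# Illustrative numbers only: monthly conversions before and after pausing
# a content cluster in one market, with a second market left untouched.
effect = diff_in_diff(
    treated_before=200,
    treated_after=170,
    control_before=210,
    control_after=205,
)
print(effect)  # negative means the paused market lost more than the control did
```

Subtracting the control market's change removes seasonality and other shifts that hit both markets, though the estimate is only as good as the assumption that the two markets would otherwise have moved in parallel.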

Common Mistakes to Avoid

Even sophisticated teams stumble when measuring new surfaces. Avoid these pitfalls and you will save months of rework and misinterpretation. Each mistake below has a quick fix you can implement this week. Consider bookmarking this list as a QA (Quality Assurance) step in your cadence.

- Sampling bias: Using a handful of “favorite prompts” that flatter results. Fix by enforcing representative panels and randomization.

- Ignoring sentiment: Counting mentions without tone leads to false confidence. Score tone because negative mentions can suppress conversions.

- Overfitting prompts: Engineering queries that unnaturally produce your brand. Use human phrasing and avoid brand-included prompts for awareness tests.

- Skipping citations: Presence without citations rarely moves traffic. Track and improve source-level coverage.

- Single-assistant myopia: Optimizing only for one assistant. Blend and weight assistants by audience adoption.

- No analytics link: Reporting visibility without behavior or revenue. Segment traffic and collect surveys to complete the story.

- One-and-done checks: Measuring once and drawing conclusions. Set recurring runs and watch for LLM (Large Language Model) drift.

Real-World Example: From Invisible to Referenced

A mid-market SaaS (Software as a Service) security company found that assistants rarely cited its resources in “best DLP (Data Loss Prevention) tools” prompts, despite strong traditional SEO rankings. A baseline run of 200 prompts showed a citation rate of 8 percent and a recommendation share of 12 percent. Using SEOPro AI, the team identified that assistants consistently trusted a handful of research roundups and implementation guides on authoritative domains. It then built a topic cluster with a definitive framework, added Product and HowTo schema, published a fresh third-party study, and embedded subtle machine-readable hints that reinforced entity relationships. Six weeks later, the citation rate had climbed to 27 percent and recommendation share to 31 percent, while AI-sourced assisted conversions rose 18 percent month-over-month. Crucially, leadership saw the chain from content changes to assistant behavior to pipeline.

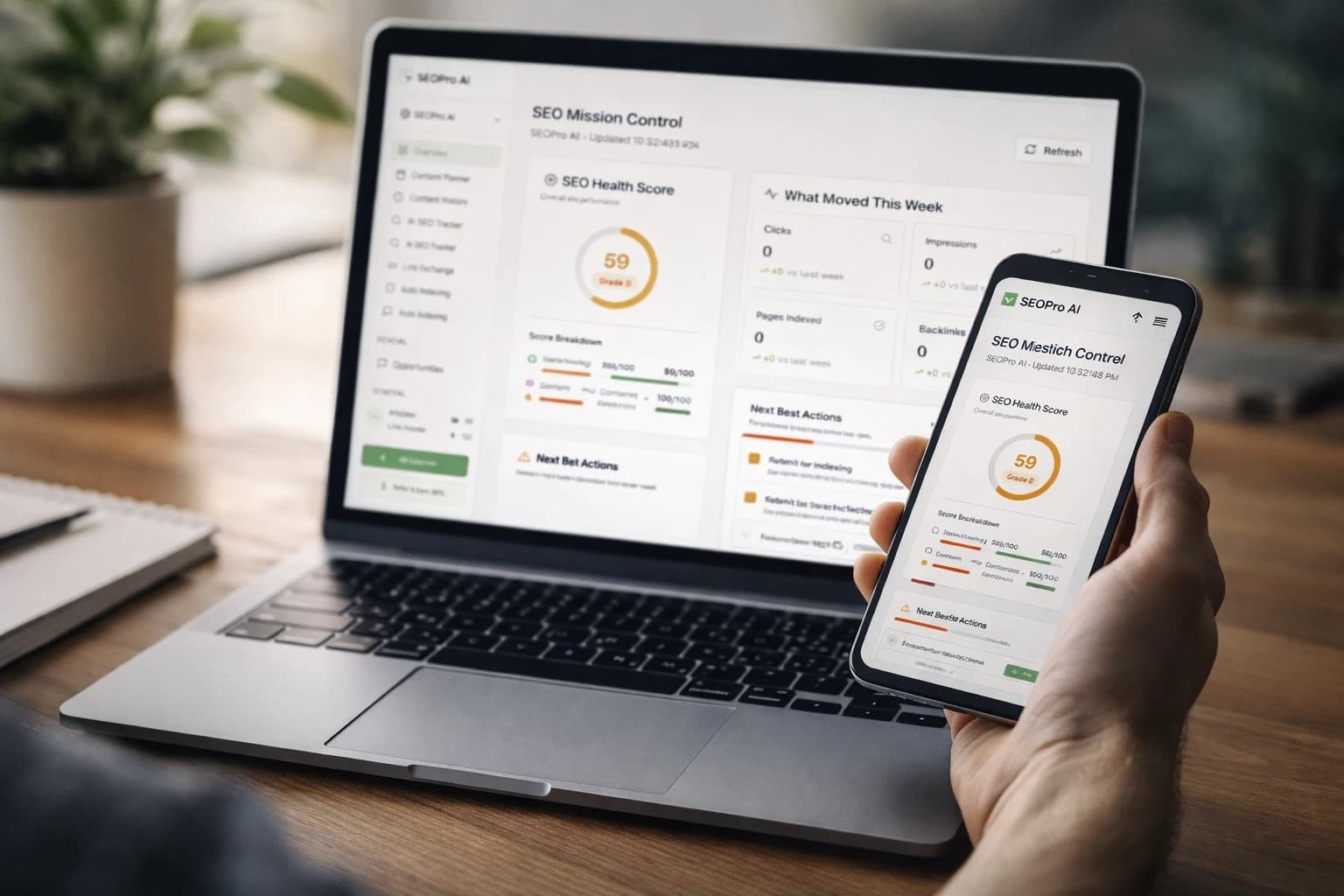

How SEOPro AI Accelerates Measurement and Improvement

Manual measurement is doable, but scale and speed matter when models shift. SEOPro AI combines an AI blog writer for automated content creation with LLM SEO tools purpose-built for assistants. You get content automation pipelines and workflow templates, hidden prompts embedded in content to trigger AI and LLM brand mentions, CMS connectors for one-time integration and multi-platform publishing, and internal linking and topic clustering tools for topical authority. The platform also includes semantic content optimization checklists and playbooks, schema markup guidance to win SERP features and Google AI Overviews, AI-powered content performance monitoring to detect ranking or LLM-driven traffic drift, and backlink and indexing optimization support. Together, these capabilities form an AI-first platform with prescriptive playbooks that shorten the loop from insight to impact, so your brand is consistently present, cited, and recommended where buying decisions are shaped.

Beyond tooling, SEOPro AI includes playbooks and audit resources that turn your measurement framework into a daily operating system. You will know exactly which prompts to track, which pages to adjust, where to publish for maximum assistant trust, and how to connect results to revenue for credible reporting to finance and the executive team. That is how modern teams win the blended world of classic search and answer-led discovery.

Closing Thoughts

Measure the signals assistants actually use (mentions, sentiment, citations, and prominence) and you will unlock a repeatable path to growth. Imagine your dashboards revealing exactly why assistants started recommending you, which sources to publish on next, and how those shifts lift qualified pipeline. What would your content roadmap look like if every update clearly moved your AI search visibility and revenue in tandem?

Elevate AI Search Visibility with SEOPro AI

Fuel growth with the AI blog writer for automated content creation: automate publishing, embed hidden prompts, connect once to CMSs, cluster topics, refine schema, and monitor LLM drift.
